Most companies in 2026 do not have an AI policy. The ones that do typically have a single generic template pulled off the internet, one that does not mention their industry, their jurisdiction, or how they actually use AI. The auditor sees this. The customer security questionnaire sees this. We built a free tool that does better.

If you are a CISO, Head of GRC, or Head of Compliance, the AI policy gap in your org is probably one of the top three findings on your next audit. The KonaSense AI Policy Generator gives you a tailored starting draft for the five AI policies that matter most in 2026, in the time it takes to fill in a short form. Try it now, or read on for what it produces and why each piece is there.

The five policies every organization needs in 2026

If you have read SOC 2 questionnaires, vendor security reviews, or the AI-specific addenda customers are starting to send during procurement in 2026, the same five policies show up over and over. Together they form the minimum coherent set. Missing any one of them is a finding.

| Policy | What it covers | Why an auditor or customer asks for it |
|---|---|---|
| AI Acceptable Use | What employees and contractors can and cannot do with AI tools, by role, data class, and use case. | The single most common AI question on a 2026 vendor security questionnaire. Without it, every other AI control is hanging in the air. |
| AI Data Handling and DLP | How data is classified, redacted, retained, and protected when it touches an AI system. | Required to demonstrate that confidential and regulated data is not being sent to consumer AI tools by mistake. |
| Prompt and Output Review | Defenses against prompt injection, output sanitization, and the human-in-the-loop bar for high-impact AI actions. | Maps directly to the controls the EU AI Act, NIST AI RMF, and SOC 2 examiners are now asking about for production AI systems. |
| Shadow AI Discovery | How the organization finds, inventories, and risk-scores the AI tools personnel use without approval. | The honest answer to the question "do you have an inventory of every AI tool in production?" requires this. |
| AI Incident Response | How the organization detects, triages, and recovers from AI incidents, including agent compromise and data leakage via AI. | The general Incident Response Plan is not sufficient. AI incidents have categories, severity definitions, and notification timelines that the standard plan does not cover. |

The free generator produces a draft of all five, tailored to your inputs, in a single pack you can download as a PDF or copy as Markdown.

Why generic templates fail

A generic AI policy template fails in three predictable ways: it says nothing about your industry, your jurisdiction, or how you actually use AI. Those are exactly the three things an auditor will probe.

The generator asks you for these three things up front, plus the compliance frameworks you are aligned to, and writes the resulting policies against your specific combination. The output is not the same for every user.

What the tool actually produces

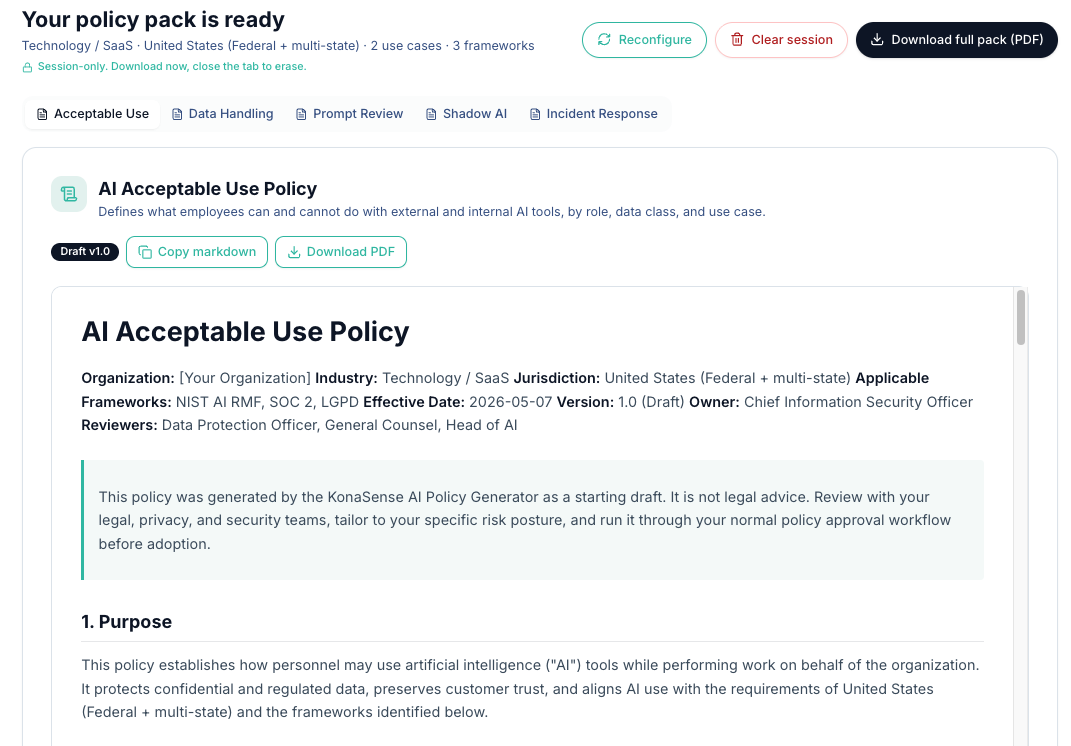

You answer five questions: industry, primary jurisdiction, company name (optional), AI use cases (multi-select), and applicable compliance frameworks (multi-select). Frameworks you can select today include NIST AI RMF, ISO/IEC 42001, SOC 2, ISO 27001, HIPAA, PCI DSS, GDPR, EU AI Act, LGPD, and NIST CSF.
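If it helps to picture the form as data, here is a minimal sketch of the five answers as a Python structure. This is our illustration, not the generator's actual schema: the class name `PolicyPackRequest`, its field names, and the rule that at least one use case must be selected are all assumptions. Only the framework names come from the list above.

```python
from dataclasses import dataclass
from typing import List, Optional

# Framework names copied from the list in the paragraph above.
SUPPORTED_FRAMEWORKS = frozenset({
    "NIST AI RMF", "ISO/IEC 42001", "SOC 2", "ISO 27001", "HIPAA",
    "PCI DSS", "GDPR", "EU AI Act", "LGPD", "NIST CSF",
})


@dataclass
class PolicyPackRequest:
    """Hypothetical model of the five form answers (not the tool's real schema)."""

    industry: str
    primary_jurisdiction: str
    ai_use_cases: List[str]             # multi-select
    frameworks: List[str]               # multi-select
    company_name: Optional[str] = None  # the only optional question

    def validate(self) -> List[str]:
        """Return human-readable problems; an empty list means the form is usable."""
        problems = []
        if not self.industry.strip():
            problems.append("industry is required")
        if not self.primary_jurisdiction.strip():
            problems.append("primary jurisdiction is required")
        # Assumption: a draft needs at least one use case to tailor against.
        if not self.ai_use_cases:
            problems.append("select at least one AI use case")
        unknown = sorted(set(self.frameworks) - SUPPORTED_FRAMEWORKS)
        if unknown:
            problems.append("unsupported frameworks: " + ", ".join(unknown))
        return problems


if __name__ == "__main__":
    request = PolicyPackRequest(
        industry="Healthcare",
        primary_jurisdiction="United States",
        ai_use_cases=["coding assistants", "customer support agents"],
        frameworks=["HIPAA", "SOC 2"],
    )
    print(request.validate())
```

The point of writing it down this way is that four of the five answers drive the tailoring, and the company name is the only one you can skip.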

The tool then produces a 24-page draft pack with all five policies. Each policy includes:

- A clearly labeled draft version with effective date, owner, and reviewers

- Sections tailored to your jurisdiction (specific statutes and obligations called out by name)

- Sections tailored to your industry (the risk profile and control language that fits your sector)

- Use-case-specific control sections for the AI use cases you selected (for example, coding assistants get a different control set than customer support agents)

- A framework alignment table mapping the policy to each compliance framework you selected

- Cross-references to related policies in the pack

- Standard sections for definitions, scope, roles, exception process, training, monitoring, enforcement, and revision history

The pack is generated as a starting draft. Every policy includes a clearly stated disclaimer that it is not legal advice and must be reviewed by your legal, privacy, and security teams and run through your normal policy approval workflow before adoption. We say this because it is true, and because anyone telling you otherwise about a generated AI policy is selling you something else.

What the tool is not

To save you time, here is what the tool is explicitly not:

- It is not a substitute for legal review. Legal nuance, contractual carve-outs, and your specific risk posture are things only your lawyers can address. The generator gets you to a credible v0.9 draft in minutes. The remaining work is real and it is yours.

- It is not a compliance certification. Having a written AI policy is necessary for SOC 2, ISO 42001, EU AI Act readiness, and most enterprise security questionnaires. It is not sufficient on its own. You also need the controls, the evidence, and the operational discipline behind the policy.

- It is not the same policy for every user. The output is shaped by your inputs. Two organizations that submit different industries or different use cases will receive meaningfully different drafts.

- It is not a replacement for the rest of your policy library. The pack covers AI-specific policies. It assumes you already have, or will have, a Data Classification Policy, an Information Security Policy, an Incident Response Plan, and the other foundational documents the AI policies cross-reference.

Who should use this

| Role | What you get out of it |
|---|---|
| CISO or Head of Security | A defensible answer to the AI section of your next audit and your next customer security questionnaire, in hours instead of weeks. |
| Head of GRC or Compliance | A starting draft that already maps to NIST AI RMF, SOC 2, ISO 42001, EU AI Act, LGPD, and the other frameworks you are pursuing, with framework alignment tables included. |
| Head of Engineering or Platform | A coherent policy for AI coding assistants and agentic tooling that your developers can actually follow, including sanctioned-tool registries and the human-review bar for AI-generated changes to auth, crypto, and payment flows. |
| Founder or operator | The fastest path from "we have no AI policy" to "we have a credible draft AI policy pack" so your sales team can answer questionnaires without losing the deal. |

Why we built this

KonaSense is an Agent Control Plane. We sell the security, governance, and observability layer that enforces AI policy at the moment of action: at the browser, the IDE, the CLI, and the agentic pipeline. Selling enforcement to an org that has no written policy is the wrong order of operations. The policy comes first.

We kept seeing the same conversation in customer calls. The CISO knows they need an AI policy. They have a draft sitting in a Google Doc that someone started six months ago and abandoned. They have an auditor or a major customer asking for it now. They do not have time to start from scratch and they do not trust a free template from a vendor whose business model is to sell them something else later.

We built the generator because the bar should be higher than "free template" and lower than "law firm engagement." A starting draft, tailored to your context, that you can walk into a legal review with. That is what the tool produces.

If you also want the layer that enforces the policy after you adopt it, that is the rest of what KonaSense does. But the tool is free, and using it does not commit you to anything else.

Generate your pack

Open the AI Policy Generator, answer five questions, and download your pack. If you want to talk to us about what comes after the policy, book a 30-minute walkthrough and we will show you what enforcement at the action layer looks like in practice.